Bret Battey blogging sundry ideas, favorable events, works in progress, and miscellaneous solutions in digital music and video-music research and creation. I see this as a subsidiary of my web site, BatHatMedia.com.

Wednesday, November 20, 2019

MADATAC Screening, 18 Dec 2019, Venice

MADATAC's Director Lury Lech will present the festival's 2019 awarded works in a screening and talk at Auditorium Santa Margherita, University of Venice on Dec 18, 2019. This will include Estuaries 3. More information is available at https://www.unive.it/data/33113/2/34689

Tuesday, November 12, 2019

New Installation: 'Three Breaths in Empty Space' – Nov 19 - Dec 31 2019

Three Breaths in Empty Space is my new multi-channel sound and video installation, commissioned by Phoenix Cinema, Leicester, to celebrate the 10th Anniversary of their move to the Cultural Quarter. It will run mid-November to the end of December, filling the Phoenix Gallery with abstract computer animations and surround sound generated with custom software systems. The work invites participants to contemplate continuous change and shimmering instabilities in everything from subatomic activity to the level of the cosmos. Are we witnessing quantum foam on invisible waves, nerve patterns in the body-mind, maps of social structures coalescing and transforming, or transfigurations of some vast nebula? As ghostly fragments of Maurice Ravel’s piano work Ondine occasionally materialise and dissolve at peaks of audiovisual intensity, we can ponder how phenomena arise and pass in Emptiness.

Thursday, August 29, 2019

Using MIDI and Max to Generate Keyframes for Apple Motion

There may not be anyone else on the planet (current population 7.7 billion) who wants to do this, but in case you are out there, here is a kludge of a solution...

For my Estuaries series of audiovisual compositions, I sometimes used data from my Nodewebba software and Max to control not only the music but also the visuals, which I was authoring in Apple Motion (v. 5.3).

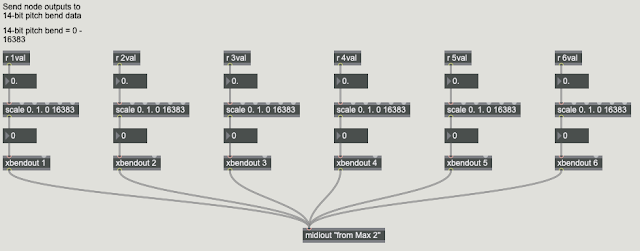

I did this by capturing the needed data from Nodewebba as 14-bit pitch-bend data into the sequencer where I was authoring and editing the music. The picture above shows Max code that takes the six floating-point outputs of the Nodewebba nodes, scales them to the 14-bit pitch-bend range, and sends them out via the "from Max 2" port, using a different MIDI channel for each data stream. My MIDI sequencer, whose transport was locked to control Nodewebba, received the data and recorded it on six separate tracks.
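The scaling step is done in Max, but the arithmetic behind it may be clearer as text. Below is a minimal Python sketch, assuming each node output is already normalized to 0.0-1.0 (Nodewebba's actual output ranges may differ). It maps a float onto the 14-bit pitch-bend range and packs it into a standard three-byte MIDI pitch-bend message, one channel per stream. The function name is hypothetical.

```python
def float_to_pitch_bend(x: float, channel: int) -> bytes:
    """Scale a float in [0.0, 1.0] to a 14-bit pitch-bend value (0..16383)
    and pack it into a 3-byte MIDI pitch-bend message on channel 1-16."""
    if not 1 <= channel <= 16:
        raise ValueError("MIDI channel must be 1-16")
    x = min(max(x, 0.0), 1.0)          # clamp to the assumed 0.0-1.0 input range
    value = round(x * 16383)           # 14-bit range: 0..16383
    status = 0xE0 | (channel - 1)      # pitch-bend status byte for this channel
    lsb = value & 0x7F                 # low 7 bits are sent first
    msb = (value >> 7) & 0x7F          # high 7 bits are sent second
    return bytes([status, lsb, msb])

# Six node outputs, one MIDI channel per data stream (channels 1-6).
node_outputs = [0.0, 0.25, 0.5, 0.75, 1.0, 0.1]
messages = [float_to_pitch_bend(v, ch) for ch, v in enumerate(node_outputs, start=1)]
```

Splitting the value into two 7-bit data bytes is what gives pitch bend its 14-bit resolution, which is why it is a convenient carrier for continuous control data.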

I then wrote Max code that receives this MIDI data, scales the 14-bit pitch-bend values to the desired values for Motion keyframes, and writes a text file containing Motion XML keyframe specifications (as described in the Motion XML File Format document available from the Apple Developer site). Since each keyframe must be given a specific time value, the Max code must lock to the sequencer transport and calculate absolute time. I achieved this by using a hostsync~ object to receive the transport activation. For reasons I don't recall, I ended up using clocker to generate elapsed time rather than trying to calculate it from hostsync~. This remained sufficiently accurate over short periods of time.
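On the receiving side, the pitch-bend bytes are reassembled into a 14-bit value and mapped onto whatever range a given Motion parameter needs. This is a minimal Python sketch of that mapping, not the Max patch itself; the linear mapping and the `lo`/`hi` target range are assumptions for illustration.

```python
def pitch_bend_to_value(message: bytes, lo: float, hi: float) -> tuple[int, float]:
    """Decode a 3-byte MIDI pitch-bend message and map its 14-bit value
    linearly onto [lo, hi]. Returns (MIDI channel 1-16, scaled value)."""
    status, lsb, msb = message
    if status & 0xF0 != 0xE0:
        raise ValueError("not a pitch-bend message")
    channel = (status & 0x0F) + 1      # channel tells us which data stream this is
    raw = (msb << 7) | lsb             # reassemble the 0..16383 value
    return channel, lo + (hi - lo) * raw / 16383
```

For example, a message on channel 2 could be routed to a Motion rotation parameter with `lo=0.0, hi=360.0`, while channel 3 drives opacity with `lo=0.0, hi=1.0`.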

I have posted the Max code for converting a timestamp and value to Motion XML (bat.keypointGen) on my website under Software: Max/MSP/Jitter tools. This code gathers the keyframe XML entries into a text object, and the contents can then be written to a text file.

There is an important trick in this code: Motion uses rational time values to give very precise time indications that are independent of FPS (I believe). For example, here is a time from a keyframe in Motion XML:

`<time>3937280 153600 1 0</time>`

To explain this, it is probably best to quote Apple's Darrin Cardini, who answered my query on the ProAppsDev support list when I was trying to understand the value 2831360 153600 1 0 given for a keyframe at frame 554 in a 30 fps project:

The times you’re seeing are rational times. The first 2 numbers are a numerator and denominator. The OS has a rational time type named CMTime, defined in the CoreMedia.framework headers (see CMTime.h for details). So the value you list below is 2831360 / 153600 = 18.433.. seconds. 554 frames at 30fps = 18.46666666666667. The difference between those is 0.03… or 1/30th of a second, or 1 frame. That’s because frame numbering starts at 1, but time starts at 0, I think, so they’ll always differ by 1 frame time.
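Cardini's arithmetic can be checked exactly with Python's `fractions` module. This sketch parses the four numbers of a Motion `<time>` element into exact seconds plus the flags and epoch fields, then recovers the 1-based frame number using his "frame numbering starts at 1, time starts at 0" observation. The function names are hypothetical.

```python
import re
from fractions import Fraction

def parse_motion_time(text: str) -> tuple[Fraction, int, int]:
    """Parse '<time>VALUE TIMESCALE FLAGS EPOCH</time>' (or just the four
    numbers) into (exact seconds as a Fraction, flags, epoch)."""
    m = re.search(r"(-?\d+)\s+(\d+)\s+(\d+)\s+(-?\d+)", text)
    if m is None:
        raise ValueError(f"no rational time found in {text!r}")
    value, timescale, flags, epoch = (int(g) for g in m.groups())
    return Fraction(value, timescale), flags, epoch

def frame_number(seconds: Fraction, fps: int) -> int:
    """1-based frame number: time 0 is frame 1, so add one frame."""
    frames = seconds * fps
    if frames.denominator != 1:
        raise ValueError("time does not fall on a frame boundary")
    return int(frames) + 1

secs, flags, epoch = parse_motion_time("<time>2831360 153600 1 0</time>")
print(secs, float(secs), frame_number(secs, 30))  # 553/30 18.433333333333334 554
```

Using exact fractions rather than floats avoids any rounding doubt about whether a time lands precisely on a frame.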

The extra 1 and 0 you see after the numbers in the time are also described in the CMTime structure. The 1 is a flag that says the structure is valid, and the 0 is the epoch (or more mundanely, the loop number). For most purposes you will want to keep these values at 1 and 0, respectively.

To generate one of these times yourself, you’ll want to pick a timebase (denominator) that makes your life easier. Let’s say you have a project that is 1080p30. You could just use 30 for the denominator, and each frame would be addressed as 0 / 30, 1 / 30, 2 / 30, etc. However, as soon as you need to deal with any footage or projects at a different frame rate than 30, you’ll run into problems. (What happens when someone drops a 30fps piece of footage into a 24fps project? Or vice-versa?) So finding a common denominator among the time bases is useful. For 24, 25, 30, 50, and 60, the timebase of 600 works quite nicely, as you can address individual frames in all of those frame rates as integer fractions with a denominator of 600. 1 frame at 24 fps = 25/600. 1 frame at 25fps = 24/600. 1 frame at 30 fps = 20/600th, etc.
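The "common denominator" Cardini describes is the least common multiple of the frame rates. A short Python sketch (integer frame rates only; rates such as 29.97 would need a different treatment):

```python
from math import lcm  # Python 3.9+

def common_timebase(frame_rates: list[int]) -> int:
    """Smallest timebase in which one frame at every given integer
    frame rate is a whole number of ticks."""
    return lcm(*frame_rates)

rates = [24, 25, 30, 50, 60]
base = common_timebase(rates)                       # 600
ticks_per_frame = {fps: base // fps for fps in rates}
# {24: 25, 25: 24, 30: 20, 50: 12, 60: 10}

# 153600 is 256 * 600, so it divides evenly by all of these rates too.
assert all(153600 % fps == 0 for fps in rates)
```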

I followed the example of what my Motion project was generating, and used 153600 as the denominator.
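Going the other direction, a time can be formatted as a Motion rational time with that denominator. This is a minimal Python sketch, not the bat.keypointGen code: it rounds to the nearest tick, keeps flags at 1 and epoch at 0 as Cardini advises, and assumes elapsed time arrives in milliseconds, as from a clocker-style counter. Only the `<time>` element is produced; the surrounding keyframe XML is left to the Motion XML File Format document.

```python
from fractions import Fraction

TIMESCALE = 153600  # the denominator my Motion project itself was generating

def motion_time(seconds, timescale: int = TIMESCALE) -> str:
    """Format seconds (int, float, or Fraction) as a Motion rational time,
    rounded to the nearest tick, with flags=1 (valid) and epoch=0."""
    ticks = round(Fraction(seconds) * timescale)
    return f"<time>{ticks} {timescale} 1 0</time>"

def frame_to_motion_time(frame: int, fps: int) -> str:
    """Frame numbers start at 1 but time starts at 0,
    so frame N sits at (N - 1) / fps seconds."""
    return motion_time(Fraction(frame - 1, fps))

def elapsed_ms_to_motion_time(ms: int) -> str:
    """Convert an elapsed-time count in milliseconds to a Motion time."""
    return motion_time(Fraction(ms, 1000))

print(frame_to_motion_time(554, 30))  # <time>2831360 153600 1 0</time>
```

Because 1 ms is 153.6 ticks at this timescale, millisecond times generally need rounding, whereas frame-aligned times at 24, 25, 30, 50, or 60 fps convert exactly.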

One remaining problem: Max cannot be coerced into writing " characters correctly in the text file, so the file comes out with & characters where the " characters belong. I had to open the Max-generated text file and search-and-replace every & with ". Yes, not very elegant, but it does the job.
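That search-and-replace step could also be scripted. A minimal Python sketch, assuming & appears in the generated file only as a stand-in for " (any legitimate & in the text, such as an XML entity, would be clobbered too); the function names are hypothetical.

```python
from pathlib import Path

def restore_quotes(text: str, placeholder: str = "&") -> str:
    """Replace every placeholder character with a double quote."""
    return text.replace(placeholder, '"')

def restore_quotes_in_file(path) -> None:
    """Rewrite a Max-generated text file in place with quotes restored."""
    p = Path(path)
    p.write_text(restore_quotes(p.read_text(encoding="utf-8")), encoding="utf-8")
```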

I then opened the Motion project file as text, found the data for the particular project element I wanted to provide keyframes for, and copied/pasted the contents of the Max-generated text file into the Motion XML. Finally, I saved the edited project file and opened it in Motion.

Why not generate the keyframes directly from the original Nodewebba data rather than going through the intermediate sequencer capture? In the sequencer I did further editing, including tempo manipulations, that altered the timing of the events. Reading the node data back from the sequencer therefore gave me the correct timings for the visuals.

Tuesday, February 26, 2019

"Estuaries 3" Award from MADATAC X

I am honored to share that Estuaries 3 has just received the "Best Audiovisual" Award from the MADATAC X competition in Madrid, Feb 24, 2019.

Monday, February 18, 2019

"Estuaries 3" screening in Tallinn

The Composition and Improvisational Performing Art Department of the Estonian Academy of Music and Theatre presents Festival ComMuTe in April. This will include a screening of Estuaries 3 at Cinema Soprus on April 28 at 19:00.
